CLAS Professor Reveals the Science of Political Surveys

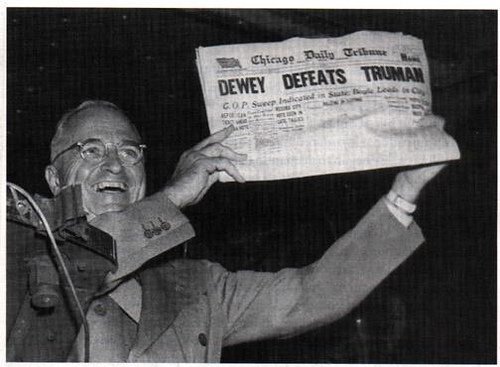

You might remember from a long-ago history class that in 1948, a young pollster named George Gallup predicted that Gov. Thomas Dewey would win the presidential election, a forecast featured dramatically on the Chicago Tribune’s front page. Harry Truman, as we know, actually won.

What went wrong?

Gallup began polling representative samples of the population in 1936 using in-person interviewers, who would knock on doors and ask people who they were likely to vote for. What he didn’t account for was basic human bias—interviewers were more likely to visit houses in neighborhoods they felt comfortable in, asking people who looked like them. Furthermore, results were mailed in, meaning that polling stopped two weeks before the election and didn’t account for late deciders.

While pollsters have since corrected for these errors (interviewing is now much more likely to take place on the phone or the Internet using random sampling, for example), the episode shows that you can’t take every political poll at face value.

That was the scope of UConn public policy professor Jennifer Dineen’s CLAS College Experience session. The CLAS College Experience, which this semester took place during Huskies Forever Weekend, gives alumni the chance to head back to the classroom to learn from UConn professors in the liberal arts and sciences.

“Caller ID, cell phones, and do-it-yourself survey tools have greatly affected polling,” said Dineen. “We’re now being inundated with surveys. There have been significant changes to the media too—the cycle is much shorter now, there are 24-hour content needs, and shifting budgets.” As a result, news outlets are often pressured to conduct quick-hit polls on their websites, or use surveys that are less rigorous in their methodology.

So what questions should you ask when you see political poll results?

- Who conducted the survey—and who paid for it? Some polls are known to have a bias in favor of one political party or another. “I have no incentive other than to be right,” said Dineen, “but other organizations might have other goals.”

- How was it conducted? Was it a live poll or one conducted by interactive voice response (IVR), otherwise known as a “robocall”? IVR polls, Dineen found, have fewer undecided voters than live surveys, and live surveys tend to lean more Democratic.

- Who was polled—and when? Polling the general public might make sense 18 months before the election, when people are just starting to think about voting, but good pollsters narrow the field to registered voters as the timeframe narrows—and to likely voters when there are only weeks or days left before Election Day. Other effects—conventions, scandals, national crises—might impact the results of political surveys, too.

- How long was data collected? Computers can now not only generate random phone numbers; they can also apply an algorithm to call times so that if you’re not home during dinner on Tuesday, you’ll get flagged to get another call Saturday afternoon. But a daily tracking poll doesn’t account for that, so the sample may be skewed.

- How were the questions asked? “Even if you ask the right questions, if you ask them the wrong way, your efforts will be for naught,” said Dineen. Good pollsters switch the order in which they list candidates and ask similar questions during the same survey to ensure a respondent’s answers make sense.

- Can I find information on how the poll was conducted? All credible polling organizations will give details of how the surveys were conducted—who was surveyed, what questions specifically they asked, and what their margin of error was.
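To make the point about switching candidate order concrete, here is a minimal sketch of one way a survey script might shuffle the list for each respondent. The candidate labels, the seed, and the `ballot_question` helper are all hypothetical and are not taken from Dineen's session.

```python
import random

def ballot_question(candidates, rng):
    """Return the candidates in a freshly shuffled order for one respondent."""
    order = list(candidates)  # copy so the master list is never changed
    rng.shuffle(order)
    return order

# Hypothetical candidate labels, used only for illustration.
candidates = ["Candidate A", "Candidate B", "Candidate C"]
rng = random.Random(2016)  # fixed seed so the sketch is repeatable

# Each respondent hears the same names in an independently shuffled order.
orders = [ballot_question(candidates, rng) for _ in range(6000)]

# Count how often each candidate is read first; shares should be near 1/3.
first_counts = {name: 0 for name in candidates}
for order in orders:
    first_counts[order[0]] += 1

for name, count in first_counts.items():
    print(f"{name} listed first in {count / len(orders):.1%} of interviews")
```

Because no name is consistently read first, any advantage that comes from being heard first is spread roughly evenly across the candidates.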

Keep in mind, too, that even the most rigorous and well-regarded pollsters can produce varying results. This could be due to errors in population sampling, analysis shaped by potential political bias, or other factors. Most polls report their margin of error at a 95% confidence level, which means that roughly one poll in 20 can be expected to miss by more than that margin through chance alone. So to get a better idea of who’s ahead, you could refer to poll aggregators like FiveThirtyEight or RealClearPolitics. Better yet, take a breather from relentless daily poll results and check in every week or so.
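For readers curious about the arithmetic, the sketch below uses the textbook margin-of-error formula for a simple random sample and then takes an unweighted average of several polls. The poll numbers are hypothetical, and the plain average is an assumption made for illustration; it is not how FiveThirtyEight or any other aggregator actually weights polls.

```python
import math

Z_95 = 1.96  # standard normal critical value for a 95% confidence level

def margin_of_error(share, sample_size, z=Z_95):
    """Sampling margin of error for a proportion from a simple random sample."""
    return z * math.sqrt(share * (1 - share) / sample_size)

def simple_average(shares):
    """Unweighted mean of one candidate's share across several polls."""
    return sum(shares) / len(shares)

# Hypothetical polls: (candidate's share, number of respondents).
polls = [(0.48, 1000), (0.51, 800), (0.46, 1200)]

for share, n in polls:
    moe = margin_of_error(share, n)
    print(f"share={share:.0%}  n={n}  margin=+/-{moe:.1%}")

average = simple_average([share for share, _ in polls])
print(f"simple average: {average:.1%}")
```

A poll of 1,000 people with a candidate near 50% carries a margin of about plus or minus 3 percentage points, so two sound polls can differ by several points without either being wrong.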

Want more insight from the UConn community on the 2016 election?

- Take a look at Professor Charles R. Venator-Santiago’s take on how Decision 2016 will impact immigration reform

- Discover why how you process information will affect how you vote

- See how a rare flag collection, currently on display at the Benton Museum, reveals that this election isn’t as unusual as you’d think

- Take solace in the fact that, thanks to a Templeton Foundation grant, UConn is leading the way in restoring civility to political discourse